SSHOC Webinar “Quanlify with ease: Combining quantitative and qualitative corpus analysis”

Thursday, April 16, 2020

11:00 AM – 12:00 PM

Application is available here.

- Learn about the conceptual reasoning to combine quantitative and qualitative research practice when working with large-scale corpora.

- Discover a novel approach to how graphical user interfaces and dashboards that implement “quanlitative” workflows can be assembled using the modular design of the “quanlify”framework.

- Discuss how implementing workflows that combine quantity and quality can become as accessible as possible for text-oriented researchers without advanced programming skills.

Digital Diplomatics: a new range of interpretation of historical documents

Historical seminar of Scientific Research Centre of Slovenian Academy of Science and Arts has in collaboration with Postgradual School ZRC SAZU organised a lecture by prof. dr. Georg Vogeler with the title

Digital Diplomatics: a new range of interpretation of historical documents.

Lecture was held on Tuesday, May 16th 2017 at the premises of ZRC SAZU in Ljubljana.

A video of the lecture, which is in English, is available at the link.

As an old auxiliary historical discipline (introduced by Mabillon in the 17th century), diplomatics has a clearly defined scope and subject: the study of official historical documents (charters) as reliable sources, since they contain distinct information on the place and date of creation. Therefore, these documents are relevant not only for historical research, but also for linguistics and other disciplines that deal with historical facts. But the documents do not always tell the truth. Diplomacy developed its basic methodology precisely to identify fakes. Since the birth of diplomacy, her research interests have developed in particular in the direction of cultural history (literacy, symbolic communication, etc.). With the development of computer technologies, the modern branch has also developed: “digital diplomatics”. The lecture will show what this research area covers and how it affects the research interests of diplomatics. This example can serve as an example, in which we better understand what happens if digital tools are applied to research objects of humanists.

Georg Vogeler works as a professor of auxiliary historical sciences and their use in digital humanities at universities in Graz and Munich. He was one of the founding members of the research institute Institut für Dokumentologie und Editorik for the development of digital methods in the field of scientific texts, especially historical documents. Since 2006, he has been the technical director of the consortium monasterium.net, which includes over 500,000 documents from the Middle and Early Modern Centuries. Since 2007, monasterium.net has been involved in the ICARUS consortium, Vogeler carries out the tasks of a technical director for the entire consortium. He led or co-organized a series of symposia and summer schools on the subject of digital humanities, in particular a series of international symposia Digital Diplomatics.

XML-TEI Markup Language in the Humanities

Introductory workshop on Digital Humanities

The workshoop took place on October 15th 2014 in Prešeren hall at the Slovene academy of Sciences and Arts in Ljubljana.

Videos are in the Slovenian language.

Introduction to XML and TEI

Tomaž Erjavec

Introductory lecture presented the basics of XML markup standard. We looked at the structure of documents and tagging model in XML and briefly discussed the character encoding with an emphasis on standard Unicode. XML schemas, which enable the formal definition of grammar and a set of markings for a particular type of document, were presented in the follow-up. In the second part of the lecture, we learned of Text Encoding Initiative. The guidelines define a system for building XML schema and document in detail more than 500 elements that TEI provides for the marking of very diverse types of texts and for different analytical treatment. The motive for the establishment and historical overview of the TEI and the main advantages of using the TEI Guidelines for Electronic Text Encoding and Interchange were given at the end.

Introduction to TEI

Matija Ogrin

The TEI Consortium Guidelines try to accommodate the diverse needs of humanists whose main object of study is text. The Guidelines set out a comprehensive set of XML tags, which can be used to mark (encod) diversified structure of humanities texts. Symbols are grouped into modules for the various areas of work with texts. In this lecture we will learn about the general structure prescribed for TEI documents, and most importantly, the modules e used by humanists when work with text.

User case: description of the manuscripts

Matija Ogrin

Manuscripts represent one of the most important segments of cultural and especially literary heritage, which is the reason that electronic databases are being created, presenting detailed descriptions of the manuscripts together with a digital facsimile of the original. TEI Guidelines contain a special module fort his particular field. This lecture presents various opportunities from less to more complex labeling enebled by TEI guidelines.

User case: biographical and prosopographical data

Petra Vide Ogrin

TEI Guidelines contains a special module for biographical and prosopographical data, which can be found in the archival regestae, prosopographies and especially in the lexicographical publications. Labeling of the biographical data, used at the web portal Slovenian biography (containg 3 lexicons: Sklovenian biographical lexicon (1925-1991), Slovene biographical lexicon of the Littoral (1974-1994) and the New Slovenian biographical lexicon (2013), was eneblad using these guidelines. This presentation describes the use of the TEI mark-ups for detailed labeling of the personal and variant names, titles and nobility predicates, place names, dates, occupations, family ties and their peculiarities.

User case: digitally born and structured data

Andrej Pančur

TEI Guidelines were originally created to mark-up digitised printed data of the analogue text, but they are used to label digitally born text, including scientific publications, in the last years. This presentation is going to adress the strengths and weaknesses of electronic publishing in the humanities using TEI Guidelines compared to some others in the publishing industry widespread markup languages (DocBook, XHTML, HTML5). In addition, we have shown how is it possible to include structured data from relational tables and databases into original digital text.

User case: Linguistically annotated corpora and dictionaries

Tomaž Erjavec

Computer text corpora form the basis for the empirical study of language, both in basic linguistic research as in applied linguistics, lexicography in particular. Guidelines TEI have a special module to record the corps and an additional module for the linguistic tags that you can add to text, which make the corpus much more useful. In this lecture we will look at some examples of linguistically labeled corpus of Slovenian language, and then record the cases of dictionary data for which guidelines also offer a separate module.

Copyright in digital world

In the time when digital technology and global communication network enable rapid reproduction and dissemination of all sorts of content, significant progress of the “knowledge society” is expected, especially because of the new possibilities to offer content online with the goal to create and disseminate knowledge.

But we run into troubles very quickly. Technology enables almost everything, while the law prohibits almost everything.

How do memory institutions cope with that problem? Which modern services can they offer to serve their goals?

That was the topic of the first workshop on copyright on 18th December 2012, led by dr. Maja Bogataj Jančič.

A vast quantity of questions crossed our minds at the first workshop, that is why dr. Maja Bogataj Jančič tried to answer 20 practical questions, which are common in our daily working routine. Workshop was organised on 17. July 2013 in the entrance hall of Scientific Research Centre of the Slovenian Academy of Sciences and Art.

TEI text mark-up in MS Word

After well visited workshop on digital humanities (XML-TEI mark-up language in Humanities) a practical workshop followed in organisation of DARIAH-SI. The workshop took place on the December 4th 2014.

Workshop was organised for researchers who would like to tag text with some basic TEI mark-ups (http://www.tei-c.org/index.xml), but are not familiar with XML mark-up language.

Andrej Pančur, who led the workshop, has presented:

- OxGarage – as format convertor,

- how to transform a MS Word DOCX document to TEI.

Participants also learned how to:

- edit MS Word document to get an optimal mark-up conversion to TEI document

- add new TEI marks by designating new MS Word.

In the final phase of the workshop followed a presentation of the DOCX to TEI to HTML converter custom made for the needs of Slovenian DH community (http://nl.ijs.si/tei/convert/).

Workshop was based on the practical user cases on available MS Word documents.

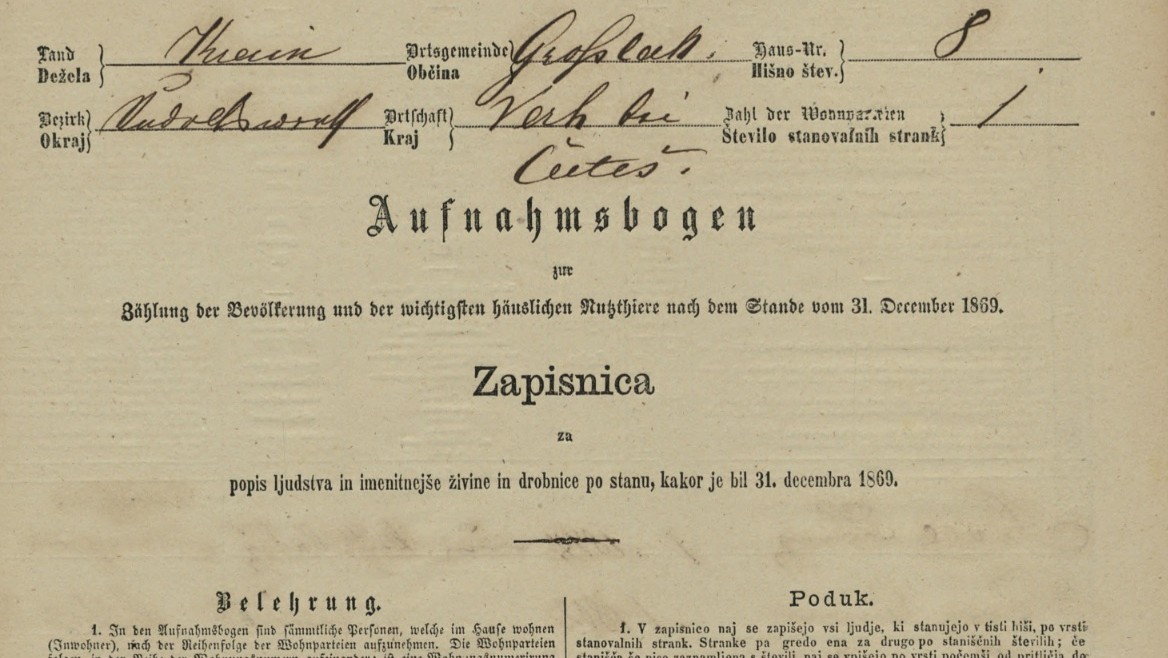

Presentation of online module Population Censuses of Slovenia 1830-1931

Andrej Pančur presented an online environment for digitised population censuses of Slovenia (1830-1931), which are one of the largest modules of the History of Slovenia-SIstory portal and important part of the national DH browser SI-DIH. Originally are this valuable archival sources part of the collections of the Ljubljana Historical Archive.

Pančur used practical cases to present different tools for browsing through population censuses data, newly developed transcription tools, which enable crowdsourcing and presented future development plans.